Most of us learned to size up companies the same way. Revenue, earnings, the line on a chart that goes up and to the right. That mental shortcut has served retail investors fine for a generation.

It just stopped working.

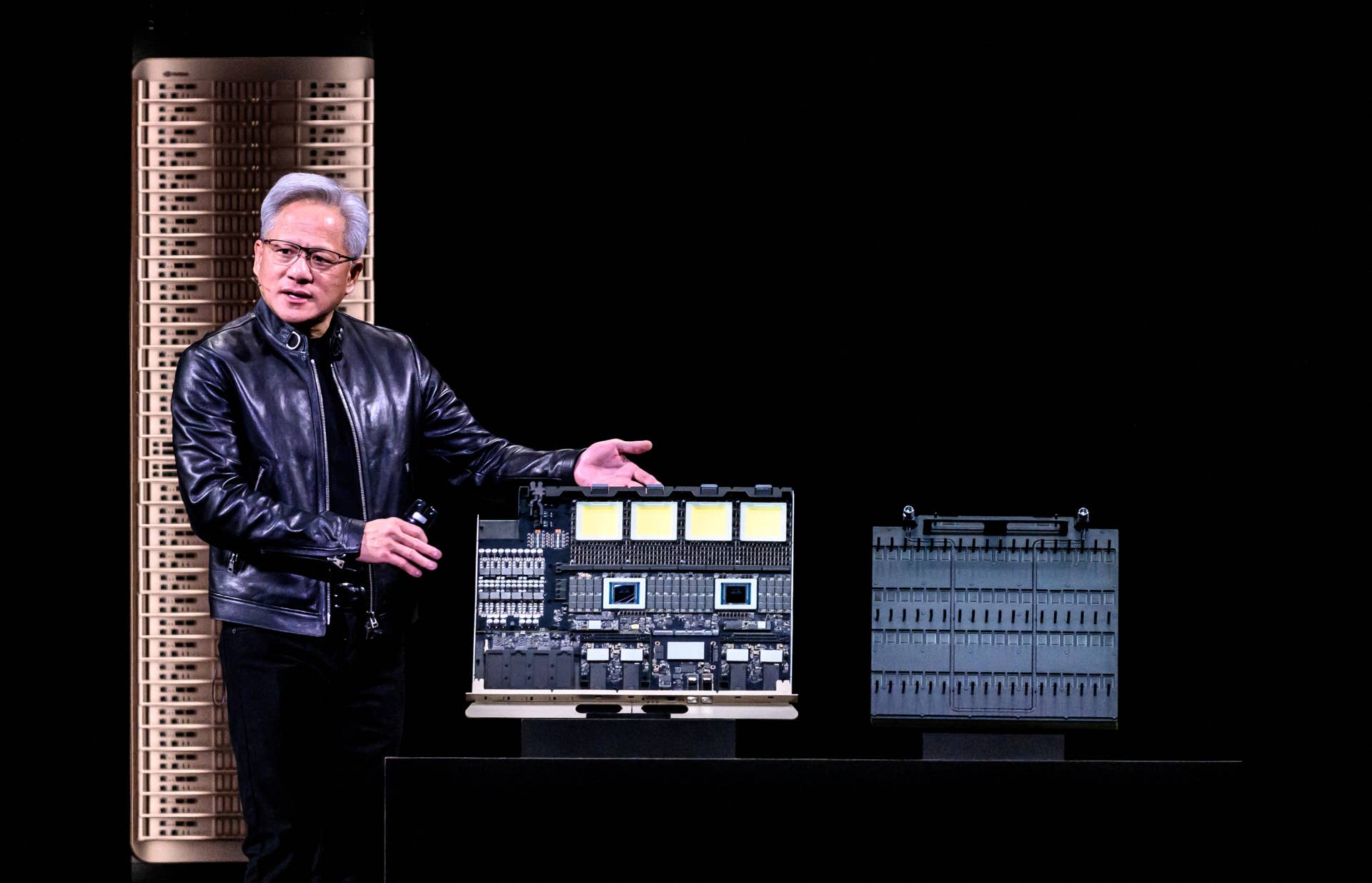

The biggest piece of the U.S. stock market is now a single chipmaker whose business model rests on something most people cannot picture, the act of “compute.” Nvidia (NVDA) closed on May 1 with a market value near $4.8 trillion, a level that puts the Santa Clara company ahead of the entire annual output of every economy on earth except the United States, China and Germany, according to International Monetary Fund projections for 2026.

That kind of valuation makes people want a simple answer to a simple question, which is whether the AI buildout still has fuel in the tank. The CEO of the company at the heart of it all just gave one.

Jensen Huang sat down live with CNBC on Tuesday, May 5, from ServiceNow’s (NOW) Knowledge 2026 conference in Las Vegas. Fortune, citing his remarks alongside the broadcast, reported that “the compute needed for agentic AI has increased 1,000% compared to generative AI” in just two years.
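A quick note on the arithmetic, because percentage increases and multiples are easy to conflate. Taken literally, a 1,000% increase means the new figure is 11 times the old one, not 1,000 times. The short Python sketch below makes that conversion explicit. The helper name is hypothetical, and the snippet says nothing about how Huang arrived at his number.

```python
def increase_pct_to_multiple(pct_increase: float) -> float:
    """Convert a percentage increase into a multiple of the starting value."""
    return 1 + pct_increase / 100


# The 1,000% figure is the one quoted in the article.
multiple = increase_pct_to_multiple(1_000)
print(f"A 1,000% increase is {multiple:.0f}x the starting level")
```

By the same logic, a 100% increase is a doubling, which is a useful sanity check when headline percentages get large.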

Why the agentic AI shift is a different beast than chatbots

Generative AI is the kind most readers already touch every day. You type a prompt into ChatGPT, the model burns through some tokens, you get an answer, and you move on with your afternoon.

Agentic AI is another animal. Agents read, plan, call tools, write code, query databases, and check their own work. They string those steps together for minutes or hours at a time, often without a human in the loop, and each step consumes more compute than a single chatbot reply ever did.

Each agent run can involve dozens of model calls, hundreds of tool invocations, and thousands of intermediate tokens before it finishes one business task. That is not a marginal increase. That is a different cost structure for the underlying data center, and it is the entire reason hyperscalers like Microsoft (MSFT), Meta (META), Amazon (AMZN) and Alphabet (GOOGL) keep raising capital expenditure budgets even as investors push back on the payback timeline.

When I look at the spending arc, the math finally squares. The four largest cloud providers have collectively committed more than $200 billion in AI infrastructure capex for 2026 alone, much of it routed straight to Nvidia’s data center products. The agents need somewhere to live and something to think with.

What Huang’s compute math means for Nvidia investors

Speaking alongside ServiceNow Chairman and CEO Bill McDermott on May 5, Huang made the bull case for the next phase of the AI cycle as plainly as he ever has.

“This is one of the greatest transformations for the software industry ever,” Huang told CNBC live from the Venetian.

The numbers behind that line are doing real work. Some of the headline figures investors are tracking right now:

- Nvidia delivered $215.94 billion in fiscal 2026 revenue, a 65% jump from a year earlier, according to Yahoo Finance.

- Q4 fiscal 2026 revenue alone hit $68.1 billion, up 73% from a year earlier, with guidance of roughly $78 billion for the next quarter, reported FinancialContent.

- Nvidia has signaled roughly $1 trillion in confirmed AI chip demand visibility through 2027, per company guidance cited by Intellectia.
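For readers who want to check the growth figures above, here is a minimal Python sketch that backs out the prior-year numbers they imply. The inputs are the revenue and growth figures quoted in the bullets. The function and variable names are mine, and the results are only as precise as the rounded percentages allow.

```python
def prior_period(current: float, growth_pct: float) -> float:
    """Back out the earlier figure implied by a current figure and its growth rate."""
    return current / (1 + growth_pct / 100)


# Figures in billions of dollars, as quoted in the article.
fy2026_revenue = 215.94
q4_revenue = 68.1
next_quarter_guidance = 78.0  # the article says "roughly $78 billion"

implied_fy2025 = prior_period(fy2026_revenue, 65)
implied_q4_year_ago = prior_period(q4_revenue, 73)

# Sequential growth implied by the guidance versus the quarter just reported.
sequential_growth_pct = (next_quarter_guidance / q4_revenue - 1) * 100

print(f"Implied fiscal 2025 revenue: ${implied_fy2025:.1f}B")
print(f"Implied year-ago Q4 revenue: ${implied_q4_year_ago:.1f}B")
print(f"Implied quarter-over-quarter growth: {sequential_growth_pct:.1f}%")
```

Under those inputs, the guidance works out to a mid-teens percentage gain in a single quarter, on a base that is already close to $70 billion.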

Wall Street is layering on louder bull calls. Bank of America’s Vivek Arya bumped his Nvidia price target to $300 from $275 earlier this year, citing an “agentic AI inflection point” and overwhelming demand for next-generation Blackwell and Vera Rubin systems, per FinancialContent.

ServiceNow’s McDermott sketched out the customer side of the trade. He projected his company would roughly double subscription revenue from about $16 billion this year to $30 billion by 2030, per Fortune. That growth target only pencils out if his customers really do replace much of their existing software with the agentic systems Huang’s chips run.
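To see what that target demands year by year, a rough compound-growth calculation helps. The Python sketch below assumes "this year" means 2026, which leaves four years of growth to 2030; that horizon is my assumption, not something McDermott specified, and the helper name is hypothetical.

```python
def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate needed to move from start to end over `years`."""
    return (end / start) ** (1 / years) - 1


start_revenue = 16.0   # $B, "about $16 billion this year" per the article
target_revenue = 30.0  # $B by 2030, per the article
years = 2030 - 2026    # assumption: "this year" is 2026

required_rate = cagr(start_revenue, target_revenue, years)
print(f"Revenue multiple: {target_revenue / start_revenue:.3f}x")
print(f"Required annual growth: {required_rate:.1%}")
```

On these assumptions the target implies growth in the high teens every year through 2030, with no off years.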

How the compute boom flows through your portfolio

Here is where I have to do some honest math against my own portfolio.

If you own a basic S&P 500 index fund through a 401(k) or a Roth IRA, Nvidia is now roughly seven cents of every dollar inside that fund. Add Microsoft, Apple (AAPL) and Alphabet, and you are above one quarter of your savings sitting in four AI-exposed names. The AI buildout is no longer a trade you opt into. It is a trade you opt out of, on purpose, with a different fund choice.
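To put those weights in dollar terms, here is a small Python sketch. The $100,000 balance is purely illustrative, and the 25% figure is used as a floor because the combined weight is described only as "above one quarter." The function name is hypothetical.

```python
def dollar_exposure(balance: float, weight: float) -> float:
    """Dollars of a fund balance attributable to a holding with the given weight."""
    return balance * weight


balance = 100_000       # hypothetical index fund balance, for illustration only
nvidia_weight = 0.07    # "roughly seven cents of every dollar"
top_four_floor = 0.25   # "above one quarter", so treated as a minimum

nvidia_dollars = dollar_exposure(balance, nvidia_weight)
top_four_dollars = dollar_exposure(balance, top_four_floor)

print(f"Nvidia alone: about ${nvidia_dollars:,.0f}")
print(f"Nvidia, Microsoft, Apple and Alphabet: at least ${top_four_dollars:,.0f}")
```

Swap in your own balance to see the exposure you already carry without having picked a single stock.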

That changes how I think about diversification. Owning the S&P 500 used to be a bet on the broad U.S. economy. Today, it is also a concentrated bet on the success of a handful of agentic AI roadmaps shipping over the next 24 months.

For people closer to retirement, the concentration risk is a live decision, not a thought experiment. Trimming the AI mega-caps and adding equal-weight S&P 500 funds, dividend payers, or short-duration Treasuries can lower the portfolio’s beta to a single industry roadmap without giving up the upside entirely.

The forward question is not whether AI compute demand keeps rising. Huang’s 1,000% figure, the hyperscaler capex commitments, and analyst calls like Arya’s all point the same direction. The harder question is who else cashes the check. Memory makers like Micron (MU), foundries like Taiwan Semiconductor (TSM), networkers like Broadcom (AVGO), and the power and cooling suppliers behind the curtain all have a claim.

Earnings season for the next leg of that story is already on the calendar. Nvidia’s next print is due later this month, and a wave of AI-adjacent reports follows right behind it. For retail investors, that stretch is the next real test, and it will tell us whether the compute boom Huang described on Tuesday is still bending the chart up and to the right.